A cybersecurity SaaS company came to us after burning through two AI writing tools. Their verdict on the previous solutions: "Obvious ChatGPT-style content that required manual editing on every single email." The reps had stopped using both tools within six weeks.

When they tested signal-grounded messaging, the response was immediate: "Quality significantly better than typical AI-generated content. First message particularly impressive."

The difference was not better prompts. It was better data.

Everyone has access to ChatGPT, Claude, Gemini, and a dozen sales-specific AI writers. Your prospects have the same access. They know what AI-generated outreach looks like because they use the same tools to write their own marketing copy. The bar for AI sales messaging just moved from "personalized" to "provably informed."

TL;DR: Most AI sales emails fail because they personalize from CRM data, not real company intelligence. The fix is grounding every message in specific, cited signals: earnings call quotes, leadership changes, hiring patterns, and strategic initiatives. Teams doing this see 15-25% reply rates compared to the 3.43% average. Email copy accounts for only about 30% of outreach effectiveness. The other 70% is data quality, deliverability, and sequencing. Better inputs produce better outputs, every time.

Why Your Prospects Can Spot AI Emails Instantly

The problem with most AI-generated sales emails is not that they sound robotic. Modern LLMs produce perfectly fluent prose. The problem is that they sound generic in a specific, recognizable way.

Here is the pattern prospects have learned to recognize:

- Vague company reference. "I noticed your company is growing fast" or "Congratulations on your recent momentum." These phrases signal that the sender knows nothing beyond a LinkedIn profile.

- Template structure. Compliment, pain point assumption, vague value prop, calendar link. Every AI email tool produces this skeleton.

- Absence of evidence. No specific data point, no cited source, no reference to anything the company actually said or did. The email could apply to 500 other companies with a single variable swap.

Prospects do not consciously analyze these patterns. They just feel them. The email registers as "another automated pitch" and gets archived in under three seconds.

The average cold email reply rate is 3.43%. That number has barely moved despite massive AI adoption across sales teams. More teams are sending AI emails, but the emails are not getting better because the inputs have not changed. Everyone is optimizing the prompt when they should be optimizing the data feeding the prompt.

The Quality Equation Most Teams Get Wrong

AI messaging quality follows a simple formula:

Output quality = Input data quality × Prompt specificity × Personalization depth

Most AI sales tools optimize for prompt specificity. They fine-tune templates, test subject line variations, and A/B test call-to-action phrasing. This matters, but it is only one variable in the equation, and not the most important one.

Email copy accounts for roughly 30% of outreach effectiveness. The remaining 70% comes from data quality (are you referencing real, timely intelligence?), deliverability (does the email reach the inbox?), and sequencing (is the timing right relative to a trigger event?).

The real differentiator is input data quality. Consider the difference:

Generic AI input: Company name, industry, employee count, prospect title, recent funding round.

Signal-grounded AI input: CEO cited a "sales transformation initiative" on last quarter's earnings call. Company hired 12 new enterprise AEs in the past 60 days. CFO mentioned "consolidating our tech stack from nine tools to four" during investor Q&A.

Feed the first input to any AI tool, and you get: "I noticed your company is growing and investing in sales. I'd love to show you how we can help."

Feed the second input, and you get: "Your CFO mentioned consolidating from nine tools to four on the Q3 call. We helped a similar team cut from seven to two while keeping the data layer intact. Worth comparing notes?"

Same AI model. Same prompt template. Completely different response from the prospect. The difference is entirely in what the AI knew before it started writing.
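To make the "same template, different inputs" point concrete, here is a minimal Python sketch. The template wording, the `build_prompt` helper, and the "Acme Corp" account are hypothetical, and the generic facts are placeholder values. Only the three signal facts come from the example above.

```python
from string import Template

# Hypothetical prompt template; the wording is illustrative only.
PROMPT = Template(
    "Write a short cold email to a $title at $company.\n"
    "Use only the facts listed below and cite the source of any fact you use.\n"
    "Facts:\n$facts"
)


def build_prompt(company: str, title: str, facts: list[str]) -> str:
    """Render the same template with whatever facts are available."""
    fact_lines = "\n".join(f"- {fact}" for fact in facts)
    return PROMPT.substitute(company=company, title=title, facts=fact_lines)


# Placeholder firmographic values, invented for this sketch.
generic_facts = [
    "Industry: software",
    "Employee count: 1,200",
    "Recently raised a funding round",
]

# The three signals from the example above, each with its source.
signal_facts = [
    'CFO said "consolidating our tech stack from nine tools to four" (investor Q&A)',
    "Hired 12 new enterprise AEs in the past 60 days (job postings)",
    'CEO cited a "sales transformation initiative" (last quarter\'s earnings call)',
]

generic_prompt = build_prompt("Acme Corp", "VP of Sales", generic_facts)
signal_prompt = build_prompt("Acme Corp", "VP of Sales", signal_facts)
```

Both prompts share the same instructions. The only thing that changes is the fact list, which is the part most teams never touch.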

“The talking points are gold. If they're in Salesmotion, I know they're being discussed inside that business. That makes it easy to spark a real conversation, which is 90 percent of the battle.”

Andrew Giordano

VP of Global Commercial Operations, Analytic Partners

Before and After: Generic vs. Signal-Grounded

Here is what the gap looks like in practice.

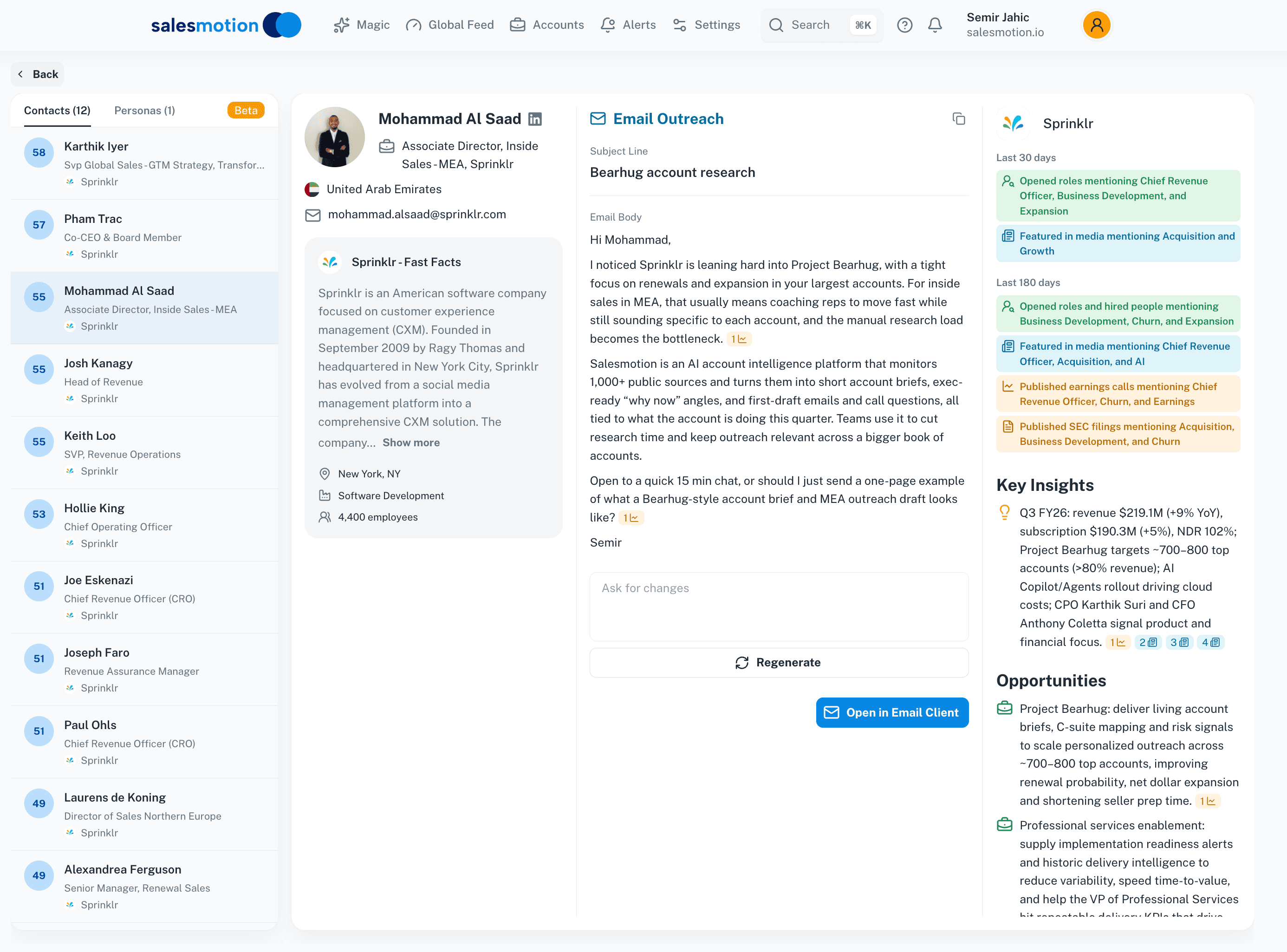

Generic AI email:

Hi Mohammad, I came across Sprinklr and was impressed by your growth in the CXM space. As Associate Director of Inside Sales, I imagine you are always looking for ways to help your team work more efficiently. We help sales teams like yours close more deals with less manual effort. Would you be open to a 15-minute call this week?

Signal-grounded email:

Hi Mohammad, I noticed Sprinklr is leaning hard into Project Bearhug, with a tight focus on renewals and expansion in your targeted accounts. For inside sales in MEA, that usually means coaching reps to move fast while still sounding specific to each account, and the manual research load becomes the bottleneck. Teams use us to cut research time and keep outreach relevant across a bigger book of accounts. Open to a quick 15-min chat, or should I just send a one-page example of what a Bearhug-style account brief and MEA outreach draft looks like?

Signal-grounded outreach drafted from real account intelligence, including project details and strategic context.


The second email references a specific internal initiative by name. It connects that initiative to the prospect's actual role and geography. It offers something concrete (an account brief example) instead of a generic meeting request. A prospect reads this and thinks: "This person actually knows what we are working on."

The data behind the second email came from the company's earnings call, parsed and mapped to the prospect's responsibilities:

Earnings call data automatically parsed into structured insights, giving the AI specific facts to reference.


This is what teams using signal-based selling mean when they talk about "letting the data write the email." The AI is not guessing what the prospect cares about. It is referencing what their own leadership said publicly.

What Enterprise Buyers Actually Want to See

A top-5 CRO with 170+ sales users gave us direct feedback on their experience with AI-generated messaging: the summaries were "not specific enough, not applicable enough" to their actual selling context. The AI produced competent prose that read like it came from a brochure, not from someone who understood the account.

This is the pattern across enterprise sales teams. The complaint is never "the grammar is bad" or "the email is too short." The complaint is always about specificity. Reps need messages that reference the exact initiative, the exact quote, the exact hiring pattern that makes the outreach relevant to this account and no other.

Teams using AI-powered personalization grounded in real signals report 18% reply rates, a 5.2x improvement over the platform average. The difference is not a better writing model. It is a better research layer feeding the writing model.


The Three Ingredients of AI Messages That Work

After analyzing what separates high-performing AI outreach from the noise, three ingredients consistently appear.

1. Real, Cited Data from Primary Sources

The message references something the prospect's company actually said or did, with a traceable source. Examples include earnings call transcripts, SEC filings, press releases, leadership interviews, and job postings, as opposed to third-party intent data or generic firmographic enrichment.

Why this matters: B2B contact data decays at 2.1% per month. By the time a database entry reaches your CRM, the prospect may have changed roles, the company may have shifted strategy, and the "intent signal" may be six months stale. Primary sources are dated and traceable, so you can check how current a signal is before you cite it.
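For a rough sense of what 2.1% per month adds up to, the arithmetic can be sketched in a few lines of Python. This assumes the decay compounds month over month, which is our assumption and not something the figure itself specifies. The function name is illustrative.

```python
MONTHLY_DECAY = 0.021  # 2.1% per month, the figure quoted above


def share_still_accurate(months: int, monthly_decay: float = MONTHLY_DECAY) -> float:
    """Fraction of records still accurate after `months`, assuming compounding decay."""
    return (1 - monthly_decay) ** months


for months in (3, 6, 12):
    stale = 1 - share_still_accurate(months)
    print(f"{months:>2} months: about {stale:.0%} of records stale")
```

Under that assumption, roughly 12% of a contact list is stale after six months and about 22% after a year.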

A GTM advisor we spoke with had built sophisticated prompts for Claude to draft emails from spreadsheet data he maintained manually. His approach worked well for 50 accounts, pulling in earnings data and news to produce personalized outreach. But he was already questioning the scalability: maintaining the spreadsheet, updating the data, running individual prompts for each account. The quality was there. The process was not built for 500 accounts.

This is the core tension with AI-powered cold outreach: the quality of the output scales with the freshness and specificity of the input, but gathering that input manually does not scale.

2. Signal-to-Value Mapping

Citing a signal is necessary but not sufficient. The message must connect the signal to a specific pain point or opportunity the prospect faces, and then connect that to a relevant capability.

The pattern looks like this:

Signal: "Your CEO mentioned a 'sales transformation initiative' on last quarter's earnings call."

Pain point bridge: "That usually means reps are spending more time on admin and research than on actual selling conversations."

Value connection: "We helped a similar team recover 6+ hours per rep per week by automating the account research step."

Without the bridge, the signal reference is just trivia. "I noticed your CEO talked about sales transformation" earns the same response as "I noticed you're in the software industry." The prospect thinks: "So what?"

The bridge is where most AI tools fail because it requires understanding not just what happened at the company, but what that event means for the specific person you are emailing. A CFO cares about the cost implications of a leadership change. A VP of Sales cares about the quota implications. Same signal, different bridge, different email.
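One way to picture "same signal, different bridge" is a small lookup keyed by the recipient's role. The Python sketch below is illustrative only: the `Signal` class, the `BRIDGES` table, and the CFO wording are hypothetical, while the signal, the VP of Sales bridge, and the value connection reuse the pattern above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    text: str    # what the company said or did
    source: str  # where a reader can verify it


# Hypothetical bridges per role. The VP of Sales wording is taken from the
# pattern above; the CFO wording is invented for illustration.
BRIDGES = {
    "VP of Sales": (
        "That usually means reps are spending more time on admin and "
        "research than on actual selling conversations."
    ),
    "CFO": "That usually puts the cost of the current tool stack under review.",
}


def draft_opening(signal: Signal, role: str, value: str) -> str:
    """Join signal, role-specific bridge, and value connection, in that order."""
    bridge = BRIDGES.get(role)
    if bridge is None:
        # No bridge means the signal would read as trivia, so refuse to draft.
        raise ValueError(f"no pain point bridge defined for role: {role}")
    return f"{signal.text} {bridge} {value}"


signal = Signal(
    text="Your CEO mentioned a 'sales transformation initiative' on last quarter's earnings call.",
    source="earnings call transcript",
)
value = (
    "We helped a similar team recover 6+ hours per rep per week by "
    "automating the account research step."
)

sales_opening = draft_opening(signal, "VP of Sales", value)
cfo_opening = draft_opening(signal, "CFO", value)
```

Raising an error when no bridge exists is a deliberate choice in this sketch. It is better to skip a draft than to send a signal reference that leaves the prospect asking "So what?"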

3. Rep Voice Calibration

The final ingredient is the hardest to automate: the message should sound like it came from the specific rep sending it, not from a content marketing team or a language model.

This means calibrating for:

- Tone. Some reps are direct and concise. Others are conversational and warm. The AI draft should match.

- Vocabulary. A rep who says "let's compare notes" should not suddenly send an email saying "I'd welcome the opportunity to explore synergies."

- Signature moves. Some reps always open with a question. Others lead with a data point. The AI should learn these patterns.

Salesmotion's Outreach Agent handles this by learning from a rep's sent email history and adjusting the draft accordingly. But even without dedicated tooling, you can improve voice calibration by including 2-3 example emails from the rep in your AI prompt as reference samples.
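The do-it-yourself version of voice calibration can be sketched as a few-shot prompt. This is a minimal Python example, not a description of how any particular product works. `build_voice_prompt` and its instruction wording are hypothetical.

```python
def build_voice_prompt(task: str, rep_samples: list[str], max_samples: int = 3) -> str:
    """Prepend 2-3 of the rep's own sent emails as style references."""
    if len(rep_samples) < 2:
        raise ValueError("provide at least two sample emails from the rep")
    samples = rep_samples[:max_samples]
    blocks = [
        f"Example {i}:\n{sample.strip()}"
        for i, sample in enumerate(samples, start=1)
    ]
    return (
        "Match the tone, vocabulary, and opening style of these emails "
        "written by the sender.\n\n"
        + "\n\n".join(blocks)
        + f"\n\nTask:\n{task}"
    )
```

Capping the samples at three keeps the prompt short, and requiring at least two avoids mimicking a single email that may not be typical of the rep.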

The 70/30 Rule: Why Copy Is Not King

Sales teams obsess over email copy because it is the most visible part of the outreach process. But copy accounts for roughly 30% of cold outreach effectiveness. The other 70% breaks down into:

- Data quality (30-35%). Are you emailing the right person at the right account at the right time? Are your signals fresh and specific? Is your contact data accurate, or has it decayed past usefulness?

- Deliverability (20-25%). Does the email reach the primary inbox? Domain authentication, sending reputation, email warmup, and list hygiene matter more than subject line optimization.

- Sequencing and timing (10-15%). Are you following up at the right intervals? Are you coordinating across channels (email, LinkedIn, phone)? Is the sequence triggered by a real event or just a calendar cadence?

This is why teams that invest heavily in AI writing tools without fixing their data layer see disappointing results. You can have the best email copy in the world, but if it references stale data, lands in the spam folder, or arrives three months after the trigger event, it will not get replies.

The teams seeing 15-25% reply rates, according to Belkins' outreach benchmarks, are investing disproportionately in the 70%: better signal detection, cleaner contact data, tighter deliverability practices, and smarter sequencing. The email copy is important, but it is the last mile, not the first.

How to Audit Your Current AI Outreach

Before overhauling your tech stack, run this diagnostic on your last 50 AI-generated emails:

- The specificity test. Pick any email at random. If you swapped in a different company name, would the email still make sense? If yes, your personalization is not specific enough.

- The source test. Does the email reference something the prospect's company actually said or did, with a traceable source? "I noticed your company is growing" fails. "Your VP of Engineering posted about migrating to microservices last month" passes.

- The "so what?" test. Does the email connect the signal to a pain point the prospect personally cares about? A data point without a bridge is trivia, not personalization.

- The voice test. Read the email aloud. Does it sound like the rep who is supposedly sending it, or does it sound like a language model? If it sounds like every other AI email, it will be treated like every other AI email.

- The freshness test. How old is the signal referenced? If it is older than 30 days, it has likely been referenced by other vendors already. B2B data decays at 2.1% per month, and signals decay even faster in terms of outreach relevance.

If more than half your emails fail two or more of these tests, the problem is not your AI tool. The problem is what you are feeding it.
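If you want to tally the audit in a script instead of a spreadsheet, the rule above reduces to a few lines of Python. This is a sketch under assumptions: a human reviewer still decides whether each test passed, and the names `EmailAudit`, `is_fresh`, and `inputs_are_the_problem` are illustrative.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass
class EmailAudit:
    """One reviewer's verdict on one email; True means the test passed."""
    specificity: bool
    source: bool
    so_what: bool
    voice: bool
    freshness: bool

    def failures(self) -> int:
        """Count how many of the five tests this email failed."""
        return sum(not getattr(self, f.name) for f in fields(self))


def is_fresh(signal_date: date, sent_date: date, max_age_days: int = 30) -> bool:
    """Freshness test: the signal is at most `max_age_days` old when sent."""
    return (sent_date - signal_date).days <= max_age_days


def inputs_are_the_problem(audits: list[EmailAudit]) -> bool:
    """True if more than half the emails fail two or more tests."""
    failing = sum(audit.failures() >= 2 for audit in audits)
    return failing > len(audits) / 2
```

Only the freshness test is computed here, because it is the one check that depends on a date and not on judgment.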

Building a Sustainable Signal-to-Message Pipeline

The long-term solution is not better prompts. It is a pipeline that continuously feeds fresh, specific, cited intelligence into your outreach workflow. That pipeline has three layers:

Layer 1: Signal detection. Automated monitoring of earnings calls, leadership changes, hiring patterns, product launches, and regulatory filings across your target accounts. This replaces the manual Google Alerts and LinkedIn stalking that most reps rely on.

Layer 2: Research synthesis. Raw signals are not useful until they are contextualized. An earnings call transcript is 8,000 words. The rep needs the three sentences that matter for their specific value proposition, with a citation they can verify. AI-powered account research compresses hours of reading into seconds of structured output.

Layer 3: Message generation. With fresh signals and synthesized research as inputs, the AI draft is specific, cited, and relevant by default. The rep's review becomes a 30-second quality check instead of a 15-minute rewrite.

Salesmotion connects all three layers into a single workflow: signals are detected across over a thousand sources, synthesized into account briefs with citations, and used to generate outreach drafts calibrated to the rep's voice. But regardless of the tooling, the architecture is the same. Fix the inputs, and the outputs take care of themselves.
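As a rough illustration of that architecture, the three layers can be sketched as three small Python functions. This is not how any vendor implements it. The `Signal` fields, the in-memory `feed`, the 30-day cutoff borrowed from the freshness test, and the "newest three" rule standing in for AI synthesis are all assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Signal:
    account: str
    text: str
    source: str
    observed: date


def detect_signals(
    feed: list[Signal], accounts: set[str], today: date, max_age_days: int = 30
) -> list[Signal]:
    """Layer 1: keep recent signals that belong to target accounts."""
    return [
        s for s in feed
        if s.account in accounts and (today - s.observed).days <= max_age_days
    ]


def synthesize(signals: list[Signal], limit: int = 3) -> list[str]:
    """Layer 2 stand-in: the newest `limit` signals, each with its citation."""
    newest = sorted(signals, key=lambda s: s.observed, reverse=True)[:limit]
    return [f"{s.text} (source: {s.source}, {s.observed.isoformat()})" for s in newest]


def build_draft_request(account: str, brief: list[str]) -> str:
    """Layer 3 input: the text handed to whatever model writes the draft."""
    facts = "\n".join(f"- {line}" for line in brief)
    return f"Draft outreach for {account} using only these cited facts:\n{facts}"
```

Each layer only consumes the output of the one before it, so you can swap a manual spreadsheet for an automated feed in layer 1 without touching the drafting step.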

Key Takeaways

- AI email quality is a data problem, not a writing problem. The model matters far less than what you feed it. Signal-grounded messages achieve 15-25% reply rates versus the 3.43% cold email average.

- Your prospects recognize AI emails instantly. Vague company references, template structures, and absence of cited evidence are dead giveaways. Specificity is the antidote.

- The 70/30 rule applies. Email copy is only about 30% of outreach effectiveness. Data quality, deliverability, and sequencing account for the rest. Invest accordingly.

- Three ingredients separate good AI outreach from noise: real cited data from primary sources, signal-to-value mapping that bridges data to pain points, and rep voice calibration.

- Audit before you overhaul. Run the five-point diagnostic on your last 50 emails before buying new tools. The bottleneck may be your data layer, not your AI.

Frequently Asked Questions

How do I tell if my AI sales emails actually sound like ChatGPT wrote them?

Run the specificity test: pick any email and swap in a different company name. If the email still reads naturally, it is too generic. Also check for three telltale signs: vague company compliments ("impressed by your growth"), formulaic structure (compliment, pain assumption, pitch, calendar link), and zero cited evidence from the prospect's own company statements. Ask a colleague outside sales to read five of your AI emails alongside five human-written ones. If they can sort them with more than 70% accuracy, your AI emails need better inputs.

What kind of data should I feed my AI tool to produce better sales emails?

Prioritize primary sources over database enrichment. Earnings call transcripts with direct leadership quotes are the highest-performing signal type, followed by executive hiring announcements (new leaders are 10x more likely to adopt new vendors in their first 90 days), strategic initiative disclosures from press releases or investor presentations, and hiring pattern data that reveals department-level priorities. Avoid relying solely on firmographic data (revenue, employee count, industry) since every competitor has the same information. The goal is to reference something your prospect's company said or did that 95% of other sellers have not noticed.

Is it worth building a manual research process, or should I invest in tooling?

It depends on your account volume. If you target around 50 accounts or fewer per quarter, manual research combined with well-crafted AI prompts can produce exceptional outreach quality, as the GTM advisor we spoke with showed. The challenge is scalability. At 200+ accounts, manual data gathering becomes the bottleneck, and B2B contact data decays at 2.1% per month, meaning your spreadsheet is getting stale faster than you can update it. Platforms like Clay or dedicated signal intelligence tools automate the data layer so your team can focus on the review and send steps. The key metric to watch is time-per-account: if reps spend more than 10 minutes researching before each email, automation will pay for itself quickly.

How many signals should I reference in a single outreach message?

One, occasionally two. The goal is depth, not breadth. A single earnings call quote explored with a clear "so what?" bridge produces a stronger email than three surface-level signal references stacked together. When you reference multiple signals, the email reads like a surveillance report rather than a relevant business conversation. If you have multiple strong signals for the same account, spread them across a multi-touch sequence: earnings quote in email one, leadership change in the LinkedIn touch, hiring pattern in the follow-up. This creates the impression of genuine ongoing attention to the account rather than a one-time data dump.

What reply rate should I realistically expect from signal-grounded AI outreach?

Top-performing teams using signal-based personalization see 15-25% reply rates, compared to the 3.43% cold email average. Your actual results depend on your ICP definition, data freshness, deliverability infrastructure, and follow-up cadence. A realistic progression: teams typically move from 3-5% to 8-12% within the first month of adding signal-grounded personalization, then to 12-18% as they refine their signal-to-value mapping and rep voice calibration. The jump from 3% to 10% usually comes from better data. The jump from 10% to 18% comes from better execution on sequencing, timing, and multi-channel coordination.